Preamble

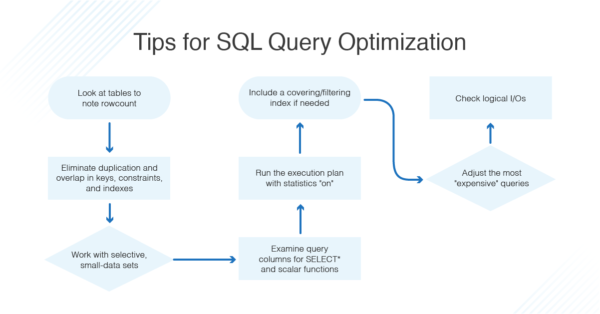

15 simple tips for action that will help you learn to write the right queries in SQL:

Optimization of tables

Necessary when many changes have been made to a table: either removed most of the data, or a lot of changes with strings of variable length – text, varchar, blob. The point is that the deleted records continue to be supported in the index file, and the subsequent insertion of new records uses positions of the old records. To defragment a file with data, the OPTIMIZE command is used.

OPTIMIZE TABLE `table1`, `table2`

Do not forget that during optimization, access to the table is blocked.

Restructuring data in the table

After frequent changes in the table, this command can improve the performance of working with data. It rearranges them in the table and sorts them by a certain field.

ALTER TABLE `table1` ORDER BY `id

Data type

It is better not to index fields that have string type, especially fields of TEXT type. For tables whose data change frequently, it is desirable to avoid using fields of the VARCHAR and BLOB types, as this type creates a dynamic string length, thus increasing the time of data access. In this case, it is recommended to use the VARCHAR field instead of TEXT, as it is faster to work with.

NOT NULL and the field by default

It is best to mark the fields as NOT NULL, as they save a little space and exclude unnecessary checks. At the same time, you should set the default field value and insert new data only if they differ from it. This will speed up the addition of data and reduce the time for table analysis. And you should keep in mind that BLOB and TEXT field types cannot contain default values.

Permanent connection to the database server

Allows you to avoid wasting time on reconnecting. However, it should be remembered that the server may have a limit on the number of connections, and if the site traffic is very high, the permanent connection can play a bad joke.

Data separation

Long not key fields advised to separate in a separate table if the original table is a constant sample of data and which changes frequently. This method will reduce the size of the variable part of the table, which will reduce the search for information.

It is especially relevant in cases when part of information in the table is intended for reading only, and the other part – not only for reading, but also for modification (do not forget that when you write information the whole table is blocked). A vivid example is attendance counter.

There is a table (name first) with fields id, content, shows. The first key with auto_increment, the second – text, and the third numerical – counts the number of hits. Each time you load a page, the last field is added +1. Let’s separate the last field into the second table. So, the first table (first) will be with the fields id, content, and the second (second) with the fields shows and first_id. The first field is clear, the second I think, too – referred to the key field id from the first table.

Now constant updates will take place in the second table. It is better to change the number of visits not programmatically, but through a query:

UPDATE second SET shows=shows+1 WHERE first_id=necessary_id

And the sample will be a complicated request, but one, two is not necessary:

SELECT first.id, first.content, second.first_id, second.shows FROM second INNER JOIN first ON (first.id = second.first_id)

It is worth remembering that this is not very relevant for sites with low attendance and little information.

Field names

For example, two tables are linked, preferably with the same name. Then simultaneous reception of the information from different tables through one inquiry will occur faster. For example, from the previous point, it is desirable that in the second table the field be named not first_id, but simply id, similar to the first table. However, if the name is the same, it is not very clear what, where and how. So the advice is for the amateur.

Require less data

If possible, avoid type requests:

SELECT * FROM `table1'

A query is not effective because it most likely returns more data than is necessary for the job. Optionally, the design is better:

SELECT id, name FROM table1 ORDER BY id LIMIT 25

Immediately I will make an addition about the desirability of using LIMIT. This command limits the number of lines returned by the request. That is, the request becomes “lighter”; and more productive.

- If LIMIT is 10, then after receiving ten lines the request is interrupted.

- If ORDER BY sorting is used in the query, it does not occur for the whole table, but only for the sample.

- If LIMIT is used in conjunction with DISTINCT, then the query will be aborted after the specified number of unique rows is found.

- If you use LIMIT 0, an empty value will be returned (sometimes needed to determine the field type or just to check the query).

Limit the use of DISTINCT

This command excludes repetitive strings as a result. This command requires an increased processing time. It is best to combine with LIMIT.

There is a little trick. If you want to view two tables on the subject of a match, this command will stop as soon as the first match is found.

SELECT DISTINCT table1.content FROM table1, table2 WHERE table1.content = table2.content

Do not forget about temporary HEAP tables

Although the table has limitations, it is convenient to store intermediate data in the table, especially when you need to make another sample from the table without reusing it. The point is that this table is stored in memory and therefore access to it is very fast.

Search by template

It depends on the size of the field and if you reduce the size from 400 bytes to 300, the search time is reduced by 25%.

Command LOAD DATA INFILE

Allows you to quickly upload large amounts of data from a text file

Storing images in the database is not desirable

It is better to store them in a folder on the server, and in the database to store the full path to them. The fact that the web server caches graphics files better than the content of the database, which means that when you subsequently access the image, it will display faster.

Maximum number of requests during page generation

I think there should be no more than 20 (+- 5 requests). At the same time, it should not depend on variable parameters.

About Enteros

Enteros offers a patented database performance management SaaS platform. It proactively identifies root causes of complex business-impacting database scalability and performance issues across a growing number of clouds, RDBMS, NoSQL, and machine learning database platforms.

The views expressed on this blog are those of the author and do not necessarily reflect the opinions of Enteros Inc. This blog may contain links to the content of third-party sites. By providing such links, Enteros Inc. does not adopt, guarantee, approve, or endorse the information, views, or products available on such sites.

Are you interested in writing for Enteros’ Blog? Please send us a pitch!

RELATED POSTS

How to Drive Intelligent Cloud Governance with Enteros Database Management Platform and AIOps

- 22 May 2026

- Database Performance Management

Introduction Cloud computing has become the foundation of modern digital transformation. Organizations across industries increasingly rely on cloud-native infrastructures, distributed applications, AI-driven services, and real-time analytics platforms to support innovation, scalability, and operational agility. Today’s enterprises operate highly complex cloud ecosystems that support: Business-critical applications Database environments Customer engagement platforms AI and machine learning workloads … Continue reading “How to Drive Intelligent Cloud Governance with Enteros Database Management Platform and AIOps”

How to Enhance Media Growth Strategies with Enteros Generative AI and Cost Management Analytics

Introduction The media industry is undergoing rapid transformation as organizations embrace digital platforms, cloud-native infrastructures, streaming technologies, AI-driven content strategies, and real-time audience analytics. Modern media companies must manage increasingly complex operational ecosystems while delivering personalized, high-quality experiences across multiple digital channels. Today’s media organizations rely heavily on digital technologies to support: Streaming platforms Content … Continue reading “How to Enhance Media Growth Strategies with Enteros Generative AI and Cost Management Analytics”

Enhancing Digital Banking Performance and Scalability with AI-Driven Database Analytics

- 21 May 2026

- Database Performance Management

Introduction Digital banking has transformed the global financial landscape. Customers now expect instant account access, real-time transactions, personalized financial services, and seamless digital experiences across mobile and web platforms. To meet these expectations, banks and financial institutions rely heavily on high-performance data infrastructure powered by complex database environments. Every digital banking operation—whether it involves payments, … Continue reading “Enhancing Digital Banking Performance and Scalability with AI-Driven Database Analytics”

How Intelligent Database Analytics Improves Performance and Reliability in Modern Healthcare Platforms

Introduction Healthcare organizations today operate in an increasingly data-driven environment. Hospitals, clinics, diagnostic centers, telemedicine platforms, and healthcare networks rely heavily on digital systems to manage patient records, medical imaging, billing systems, analytics platforms, and clinical workflows. At the center of these operations lies a complex healthcare data infrastructure powered by databases. These databases process … Continue reading “How Intelligent Database Analytics Improves Performance and Reliability in Modern Healthcare Platforms”